Our Take

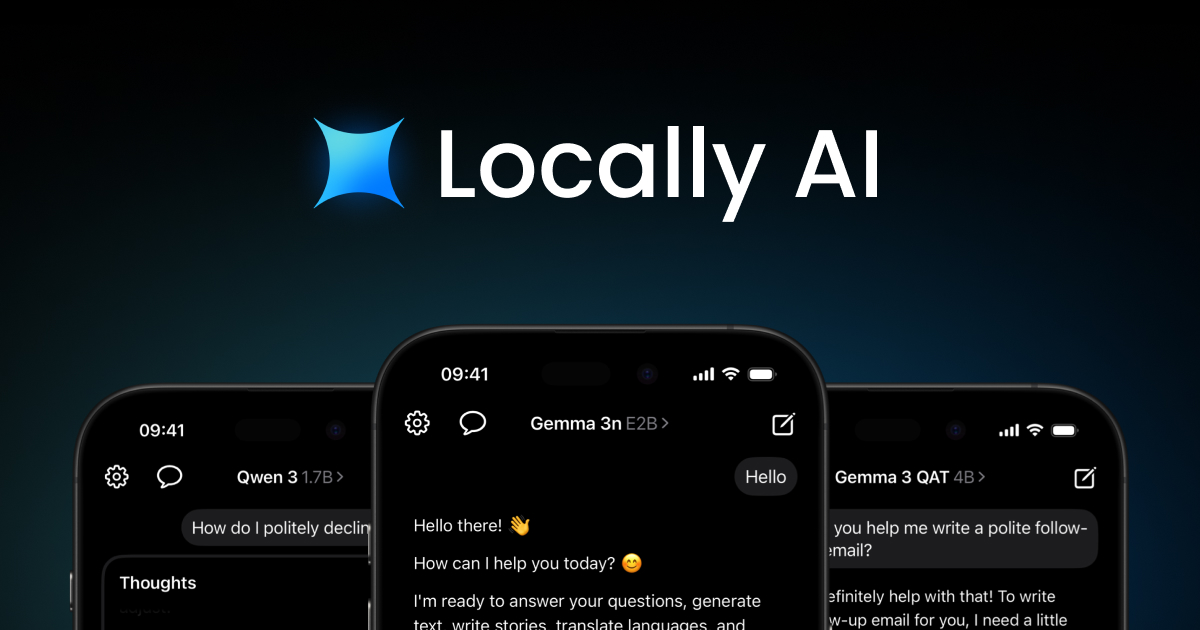

Adrien Grondin looked at the AI landscape and said "wait, why does everyone need to send their data to some server in California just to ask a question?" So he built Locally AI—an app that runs Qwen, Llama, Gemma, DeepSeek, and other open-source models directly on your iPhone, iPad, or Mac. No internet required. No login. No data leaves your device. Just you and the AI, having a private conversation on your own hardware.

That's the thing most people don't get about AI: every time you use ChatGPT or Claude, your prompts travel to a remote server to be processed, and who knows what happens after that. Locally AI said "nah, we'll just use the Apple Silicon chip already in your pocket." It runs completely offline, so your conversations stay private and never touch the cloud. It now supports Qwen 3 4B and the new Qwen 3 4B Thinking model, plus a wide range of other open-source models: Meta's Llama, Google's Gemma, DeepSeek, IBM's Granite, and more. All of them are optimized for Apple Silicon, so they actually run fast.

The public beta is live, and they're onboarding users who care about privacy or who just don't want to depend on a Wi-Fi connection. If you've ever wanted AI assistance on a flight or in a remote area, or you simply don't want corporations reading your prompts, this is your app.

The people behind Locally AI + Qwen

Adrien Grondin

Similar products worth knowing

Crowdcast 3.0

Run every type of event without switching tools

Avatar V by HeyGen

Free AI Video Generator: Create Stunning Videos with AI

Cardboard

Cursor for video editing.

Manus Skills

Package Manus workflows into reusable agent Skills
