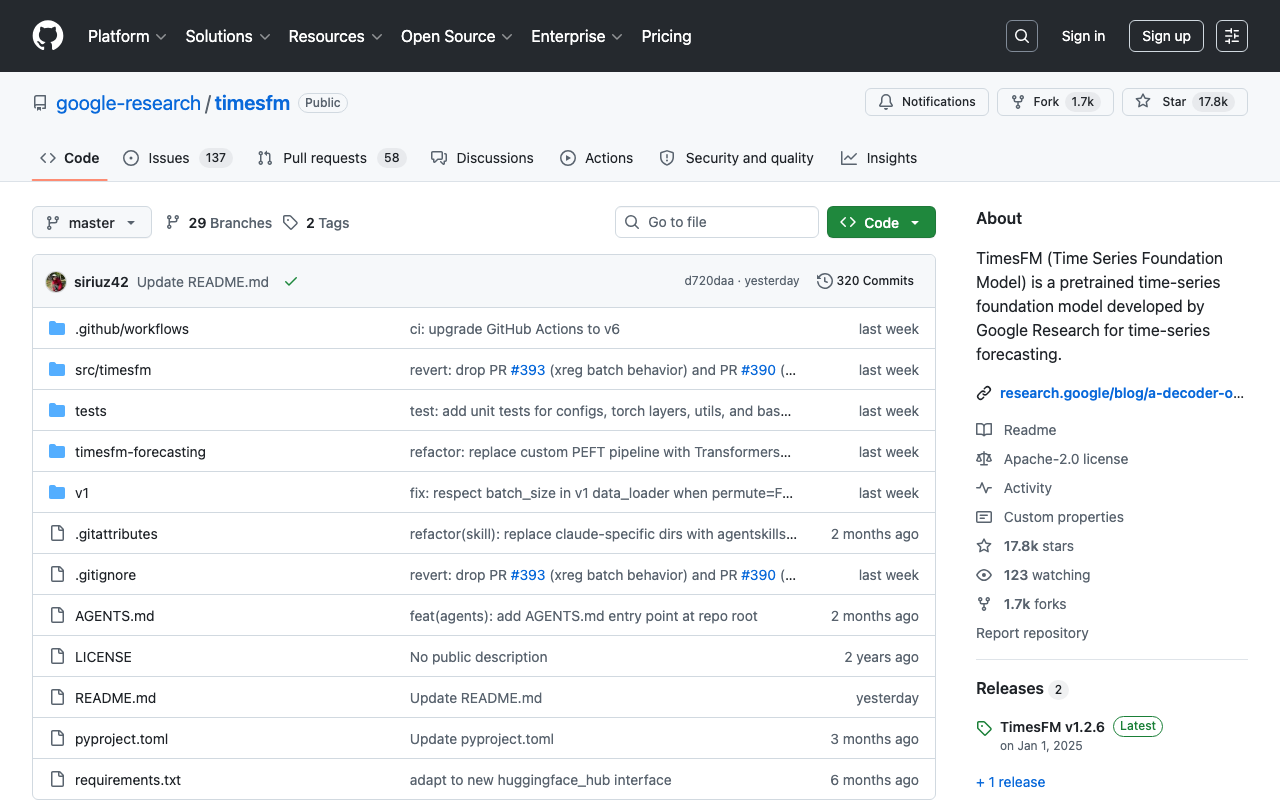

timesfm

A pretrained time-series foundation model developed by Google Research for time-series forecasting

Our Take

This is Google's time-series foundation model: 200M params, a 16k context window, a decoder-only architecture, and free to use. It has 17.5k GitHub stars, which is real traction, and it's already baked into BigQuery ML and Google Sheets, so enterprises can forecast without building from scratch. The ICML 2024 paper signals that Google is serious about this space, not just experimenting. TimesFM 2.5 is the move if you're already living in Google's ecosystem and need time-series forecasting without the headache.

Decoder-only foundation model for time-series forecasting that analyzes historical time-series data to predict future values
