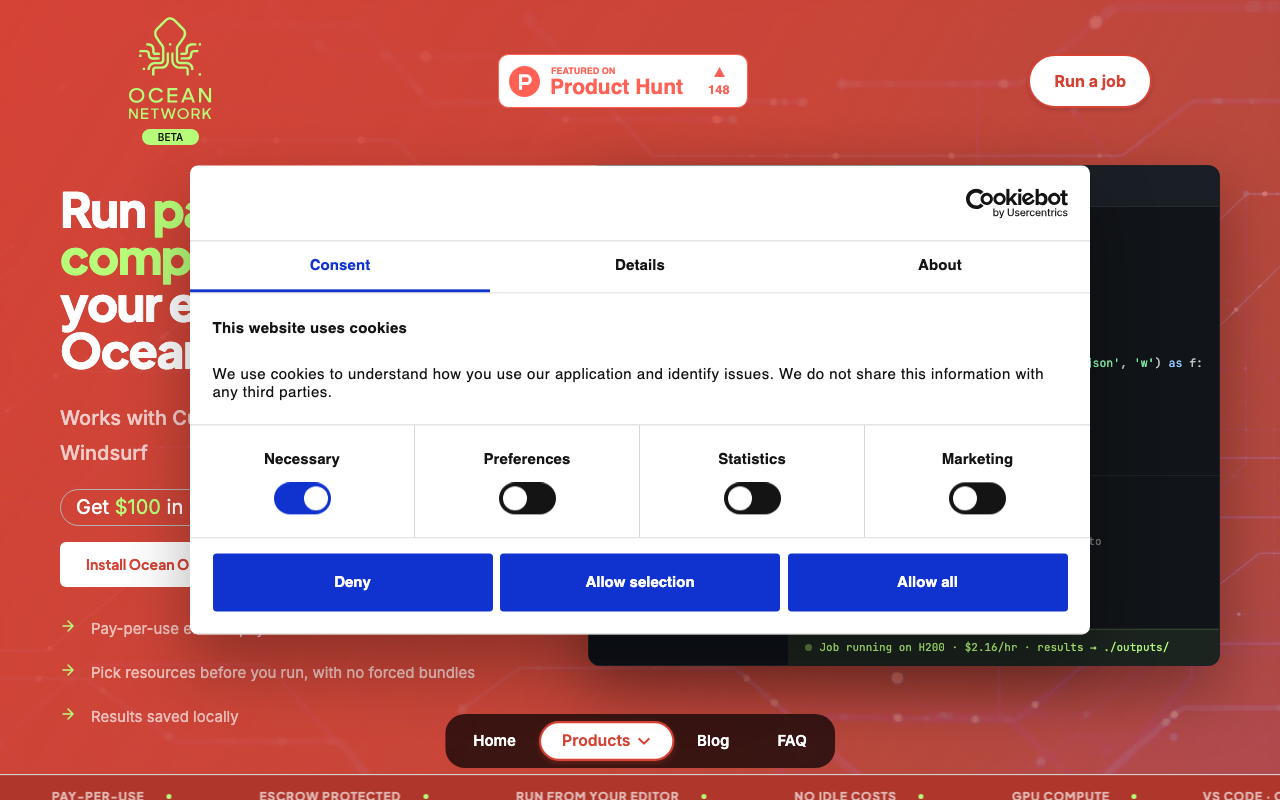

Ocean Orchestrator

Run AI jobs from your IDE with a one-click workflow.

Our Take

Sviatoslav Dvoretskii, Casiana Neagu, and Keshav Namdev looked at how developers actually run AI compute jobs today and realized it's still a disaster. You're writing code in Cursor or VS Code, then you have to SSH into some cloud instance, configure environments, manage SSH keys, monitor jobs through a separate dashboard, and hope idle instances don't rack up charges on your credit card. It's 2024 and running a GPU job feels like flying a helicopter with a paper manual.

Ocean Orchestrator deletes that entire workflow. You pick your resources—H200 GPUs, 440GB RAM, 40 vCPUs—right from your IDE, hit one button, and your code runs on the Ocean Network. Results save locally. You pay $2.16/hr for H200 compute with escrow protection, no forced bundles, no idle costs. It works with Cursor, VS Code, Windsurf, and Antigravity. They’re giving $100 in complimentary credits to new users so you can test drive it without risking your own money.
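To put those numbers in perspective, here is a back-of-the-envelope sketch in Python. It uses only the two figures quoted above ($2.16/hr and $100 in credits) and assumes billing is linear in runtime with no minimum charge, which the product page does not spell out. The function names are ours, not part of any Ocean Orchestrator API.

```python
# Rough cost arithmetic from the figures quoted above.
# Assumption: you are billed linearly for runtime only (no minimums, no idle time).
H200_RATE_USD_PER_HOUR = 2.16   # quoted H200 price
NEW_USER_CREDIT_USD = 100.00    # quoted complimentary credit


def job_cost(runtime_hours: float, rate: float = H200_RATE_USD_PER_HOUR) -> float:
    """Cost in USD of a job that runs for `runtime_hours` at `rate` per hour."""
    if runtime_hours < 0:
        raise ValueError("runtime_hours must be non-negative")
    return round(runtime_hours * rate, 2)


def hours_covered(credit: float = NEW_USER_CREDIT_USD,
                  rate: float = H200_RATE_USD_PER_HOUR) -> float:
    """Hours of compute a given credit buys at `rate` per hour."""
    return credit / rate


if __name__ == "__main__":
    print(f"A 3.5-hour job costs about ${job_cost(3.5):.2f}")
    print(f"$100 in credits covers about {hours_covered():.1f} hours")
```

Under those assumptions, the free credits work out to roughly 46 hours of H200 time, which is plenty for a real test drive.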

This is the decentralized compute play that makes sense. Instead of begging AWS for instances and paying for time you're not using, Ocean Orchestrator connects you to a peer-to-peer compute marketplace where you pay only for what you run. Developers get affordable GPU access, providers get paid for unused capacity. Everyone wins. The team is distributed globally and is currently looking for more developers to try the beta and stress-test the platform.

